As artificial intelligence (AI) continues to reshape industries, internal audit will play a crucial role in ensuring that innovation is balanced with integrity, accountability and risk awareness. Internal auditors are uniquely positioned to evaluate how organizations govern and deploy AI, helping to safeguard against unintended consequences while enabling responsible innovation.

Understanding the AI Landscape: Traditional AI vs. Generative AI

Traditional AI encompasses rule-based systems and machine learning models designed for specific, repetitive tasks. These systems rely on structured data and operate within clearly defined parameters. Internal auditors can use traditional AI for fraud detection, predictive analytics, transactional data analysis and process automation.

Conversely, generative AI (GenAI) represents a paradigm shift. It can create new content, including text, images, code and even music, based on vast datasets and sophisticated neural networks. Tools like ChatGPT, DALL-E and Copilot exemplify this. Internal audit teams are using generative AI to review documentation, perform risk assessments and even draft audit reports. While this can improve efficiency and encourage innovation, it also introduces new risks that auditors must be prepared to mitigate.

Using AI in Internal Audit

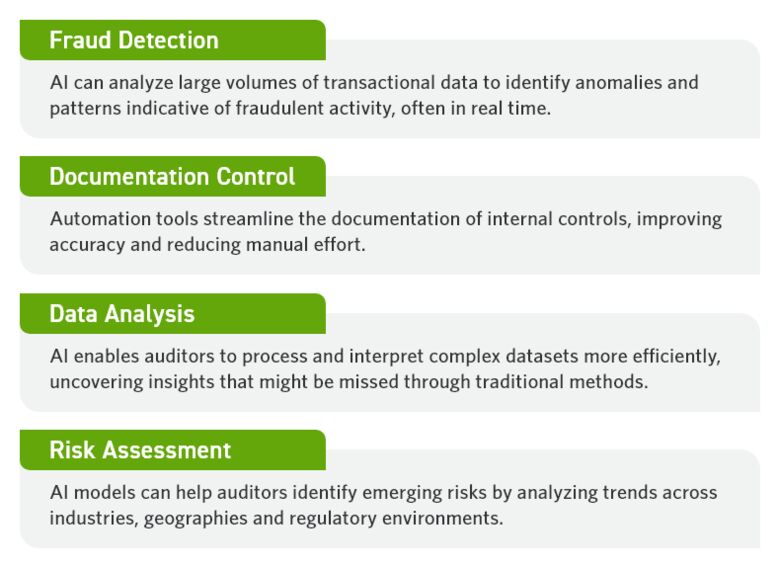

As mentioned above, the promise of greater efficiency has led many internal audit teams to adopt AI tools. Common uses include fraud detection, predictive analytics, transactional data analysis, process automation, documentation review, risk assessment and drafting audit reports.

How To Approach Implementing GenAI for Internal Audit

Before implementing AI tools in internal audit, businesses should create a solid foundation for responsible adoption and use. Taking the following steps helps ensure that AI applications align with organizational goals, regulatory requirements and ethical standards.

1. Understanding the Risks of AI in Internal Audit

Despite its benefits, generative AI adoption can come with significant risks. The key concerns that internal auditors must address are:

- Bias and Fairness: AI systems can perpetuate or amplify biases present in training data, which can lead to unfair or discriminatory outcomes.

- Hallucinations: Generative AI models may produce plausible-sounding but factually incorrect information. This may pose risks in decision-making, reporting and customer interactions.

- Outdated or Incomplete Data: AI models trained on historical data may not reflect current realities, especially in fast-changing industries or regulatory environments.

- Ethical Implications: The use of AI raises questions about transparency, accountability and the ethical boundaries of automation. Internal audit teams are responsible for confirming that organizations uphold ethical standards in AI deployment.

- Overreliance on Generative AI Results: Excessive dependence on AI tools can lead to complacency, where critical thinking and human oversight are diminished.

These risks underscore the need for internal auditors to develop a nuanced understanding of AI technologies and their implications.

2. Building a Robust Governance Framework

Although generative AI introduces several risks to the internal audit function, there are ways to offset them. One of the most effective is a comprehensive governance framework backed by an implementation roadmap. Its key components are transparency, accountability, business-wide use policies, regular reviews and cross-functional stakeholder engagement.

A strong AI governance framework starts with transparency. Organizations must document how AI systems work and clarify data sources, algorithms and limitations. Accountability is also important. Roles for AI oversight should be clearly defined so everyone knows who is responsible for which outcomes.

Business-wide use policies are essential. Document the boundaries for automation, specify which tasks require human oversight and list examples of acceptable use cases. Effective governance also requires regular reviews to keep AI systems accurate and aligned with objectives. Most importantly, a successful framework depends on engaging stakeholders across IT, legal, compliance and other business units for complete oversight.

Remember, in-depth frameworks not only support regulatory compliance but also foster a culture of responsible innovation and risk-aware decision-making.

3. Defining the Scope of an AI Internal Audit

As organizations increasingly adopt AI technologies, internal auditors play a critical role in not only using AI, but also evaluating the integrity, transparency and accountability of these systems. There are several critical areas to include in the scope of an AI audit.

Governance and Oversight

Legacy IT policies, procedures and controls simply don’t address the new risks introduced by GenAI. Rather than making tweaks and adjustments to existing IT governance and oversight, internal audit should make sure AI governance is purpose-built from the ground up and is based on recognized authoritative sources such as NIST and the EU AI Act.

Training Data Evaluation

Auditors should assess the quality, diversity and relevance of the data used to train AI models. This includes checking for biases, outdated information and data integrity.
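As an illustration of what such an assessment might look like in practice, the sketch below profiles a small sample of training records for group representation, stale records and missing values. The function name `profile_training_data`, the field names and the 365-day staleness cutoff are all hypothetical choices made for this example; real thresholds would come from the organization's own data standards.

```python
from collections import Counter
from datetime import date


def profile_training_data(records, group_key, date_key, as_of, max_age_days=365):
    """Summarize group representation, stale records and missing values.

    All parameter names and the default cutoff are illustrative assumptions.
    """
    if not records:
        raise ValueError("records must not be empty")
    total = len(records)

    # Share of records per group; a very small share may signal under-representation.
    counts = Counter(r[group_key] for r in records)
    group_shares = {group: n / total for group, n in counts.items()}

    # Records last updated more than max_age_days before the review date.
    stale = sum(1 for r in records if (as_of - r[date_key]).days > max_age_days)

    # Records with at least one missing (None) field.
    missing = sum(1 for r in records if any(v is None for v in r.values()))

    return {
        "group_shares": group_shares,
        "stale_fraction": stale / total,
        "missing_fraction": missing / total,
    }


# Hypothetical sample data for the example.
sample = [
    {"region": "North", "updated": date(2025, 1, 10), "amount": 120.0},
    {"region": "North", "updated": date(2024, 11, 2), "amount": 80.0},
    {"region": "North", "updated": date(2021, 6, 30), "amount": None},
    {"region": "South", "updated": date(2025, 2, 1), "amount": 45.0},
]
report = profile_training_data(sample, "region", "updated", as_of=date(2025, 3, 1))
print(report)
```

In this sample, three of the four records come from one region, and one record is both stale and incomplete. A summary like this does not prove bias, but it gives auditors a concrete starting point for follow-up questions.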

Cybersecurity and Data Privacy

AI systems must be protected against threats such as data breaches, model manipulation and adversarial attacks. Auditors should evaluate encryption, access controls and incident response plans.

Model Performance and Accuracy

Internal audit should review how AI models are tested, validated and monitored for accuracy and reliability over time.
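One simple form of ongoing monitoring is to compare a model's accuracy in each period against an agreed baseline. The following is a minimal sketch under stated assumptions: the function names, the baseline of 0.9 and the tolerance of 0.1 are hypothetical, and accuracy is only one of several metrics a team might track.

```python
def accuracy(predictions, actuals):
    """Fraction of predictions that match the actual outcomes."""
    if not predictions or len(predictions) != len(actuals):
        raise ValueError("predictions and actuals must be non-empty and equal in length")
    correct = sum(p == a for p, a in zip(predictions, actuals))
    return correct / len(predictions)


def flag_degraded_periods(period_results, baseline, tolerance=0.05):
    """Return (period, accuracy) pairs that fall more than `tolerance` below baseline."""
    flagged = []
    for period, (predictions, actuals) in period_results.items():
        acc = accuracy(predictions, actuals)
        if acc < baseline - tolerance:
            flagged.append((period, acc))
    return flagged


# Hypothetical monthly results: (model predictions, actual outcomes).
monthly = {
    "2025-01": ([1, 0, 1, 1], [1, 0, 1, 1]),
    "2025-02": ([1, 0, 0, 1], [1, 0, 1, 1]),
    "2025-03": ([0, 0, 0, 1], [1, 0, 1, 1]),
}
flagged = flag_degraded_periods(monthly, baseline=0.9, tolerance=0.1)
print(flagged)
```

Here February and March are flagged because their accuracy drops below the tolerated level. In an audit, the more important question is whether the organization runs a check like this at all, and who acts on the result.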

User Behavior and Access Controls

Understanding how employees interact with AI tools can reveal potential misuse, gaps in training or unintended consequences. Role-based access and usage logs are key audit points.
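A basic test of these audit points is to compare AI usage logs against the permissions each role is supposed to have. The sketch below assumes a hypothetical log format and a hypothetical `ROLE_PERMISSIONS` mapping; actual AI platforms will expose logs and roles in their own formats.

```python
# Hypothetical mapping of roles to the AI actions they are permitted to perform.
ROLE_PERMISSIONS = {
    "auditor": {"summarize_document", "draft_report"},
    "analyst": {"summarize_document"},
}


def find_access_exceptions(usage_log, role_permissions):
    """Return log entries where the action is not permitted for the user's role.

    Roles missing from the mapping are treated as having no permissions.
    """
    exceptions = []
    for entry in usage_log:
        allowed = role_permissions.get(entry["role"], set())
        if entry["action"] not in allowed:
            exceptions.append(entry)
    return exceptions


# Hypothetical usage log entries for the example.
usage_log = [
    {"user": "u1", "role": "auditor", "action": "draft_report"},
    {"user": "u2", "role": "analyst", "action": "draft_report"},
    {"user": "u3", "role": "contractor", "action": "summarize_document"},
]
exceptions = find_access_exceptions(usage_log, ROLE_PERMISSIONS)
print([entry["user"] for entry in exceptions])
```

The example flags the analyst who drafted a report and the contractor whose role has no defined permissions. Exceptions like these may point to weak access controls or to a gap in training rather than deliberate misuse.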

Third-party Risk Management

Many organizations rely on external vendors for AI solutions. Auditors must assess vendor risk, contractual obligations and compliance with data protection standards.

The Future of Internal Audit in an AI-powered World

The future of internal audit is not just about controls and compliance but about guiding organizations through technological transformation with confidence and clarity. Internal audit is no longer confined to traditional compliance and control testing, and auditors are becoming strategic partners in technology oversight. This shift requires new internal audit skills and mindsets, including:

- Data Literacy: Auditors must be comfortable working with data, understanding statistical models and interpreting AI outputs.

- Ethical Reasoning: As AI raises complex ethical questions, auditors must be equipped to evaluate decisions through a moral and societal lens.

- Technology Fluency: Familiarity with AI tools, platforms and development processes is essential for effective auditing and advisory work.

- Agility and Innovation: Internal audit must remain agile, continuously adapting to emerging technologies and evolving risk landscapes.

Internal audit has a unique opportunity to shape how organizations adopt and govern AI. By embracing this role proactively, auditors can help their organizations innovate safely, ethically and sustainably.

Your Guide Forward

Now is the time to elevate your internal audit function. By combining deep industry knowledge and technology experience, we can help you assess AI risks, implement governance frameworks and unlock the full potential of AI-driven audit capabilities.

Guided by our Risk Advisory professionals, Cherry Bekaert’s Internal Audit Services will help you navigate the evolving AI landscape with confidence and clarity.

Contact Cherry Bekaert to proactively manage AI risks, strengthen governance and transform your audit capabilities into a forward-looking, value-driving force. Start your journey toward smarter, more strategic assurance.
